Digital civility and the future of human interactivity

Opinion piece and thesis on the value of content moderation within gaming and other digital communities...

Hey there! I’m Jordan Pascasio, Investor at Next Ventures.


Connected platforms built on top of peer-to-peer value exchanges have allowed shared digital experiences to emerge across a variety of industries such as gaming (Roblox) and social media (Instagram). However, as shared digital experiences continue to grow and evolve, so does the presence of bad actors within them. As more users are able to socialize and interact in ‘parallel universes’ with self-contained immersive communities, the enforcement of digital civility becomes an operational necessity. Digital civility not only protects users from the risk of sextortion and harassment, but also allows shared digital experiences to scale and to better engage users, ultimately leading to better monetization. In this piece, I’ll focus on the gaming industry’s most ‘hair on fire’ use cases for digital civility infrastructure, identify which companies are best positioned to service the demand and explore horizontal use cases that can unlock whitespace opportunities and scale for those companies.

Digital civility within gaming

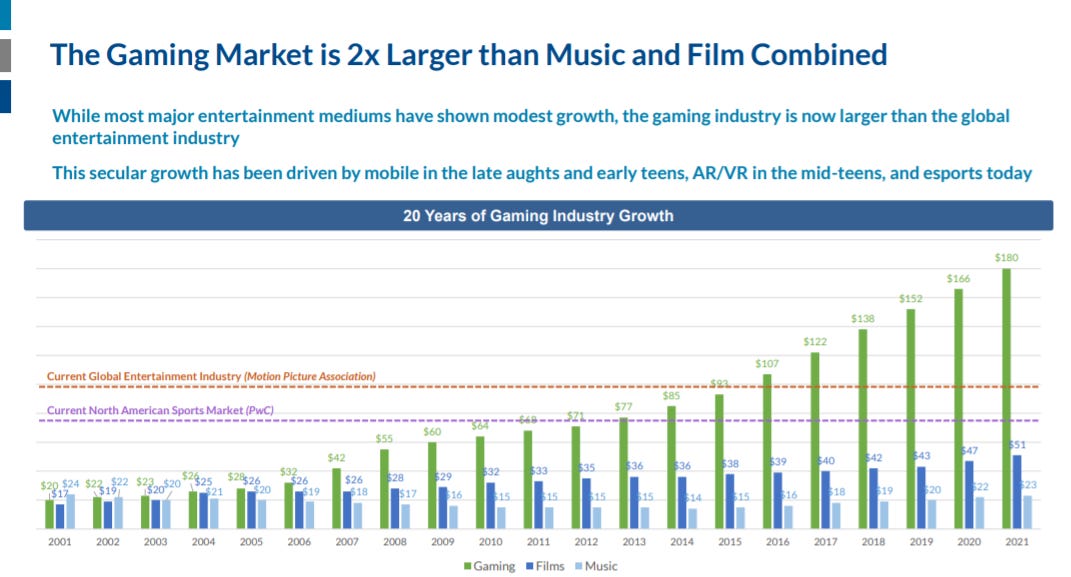

In 2020, 2.7B gamers around the world spent over $150B, eclipsing the revenue generated by music and film combined. Newzoo forecasts that the global games market will grow at a +8.3% CAGR to exceed $200B by 2023.

More importantly, gaming experiences have become the de facto venue for social interactivity and culture for digital natives, who prefer to hang out with friends within the digital realms of Fortnite, Roblox or Animal Crossing over IRL. With 87% of Gen Z playing video games at least weekly, gaming is the favorite media and entertainment activity amongst the generation, trumping other activities such as watching TV at home, listening to music, browsing the internet and engaging on social platforms [1]. The high penetration rate will only increase as the proliferation of massively multiplayer online (MMO) games and shared digital experiences opens up gateways to the proverbial metaverse.

While gaming’s exponential growth presents exciting new opportunities for the broader media & entertainment space, it also presents new, expansive venues for bad actors to target, leaving younger generations vulnerable to malicious activity. As a result, sextortion and harassment occurrences in gaming are climbing rapidly, prompting the need for more public exposure around these concerning developments in order to protect players of all ages. Let’s take a look at these two core issues plaguing games today as well as their negative implications for game publishers.

Sextortion

The sheer scale of virtual communities within MMOs such as Roblox (42M DAUs) or Fortnite (25M DAUs) offers online predators a bountiful starting point for malicious schemes. This is exacerbated by the fact that these virtual communities are becoming more than just a place to play video games. While most parents think that Fortnite and Roblox consist of a series of ephemeral game sessions with limited social interactions, they fail to realize that these games are expanding their player experiences to function more like social networks disguised as games. Here are a few examples:

- Fortnite’s Party Royale is focused on “partying, not fighting”, hosting events such as Travis Scott and Marshmello virtual concerts

- Roblox and Warner Bros. Pictures recently hosted a launch party for the movie In the Heights. Developers created an immersive experience that simulated the Washington Heights neighborhood from the film so that players could attend a launch party for the film in the virtual space

- Nintendo’s Animal Crossing is a social simulation game in which you build your own island and invite friends and strangers to come explore it, engaging in activities such as attending virtual birthday parties, fishing and shopping

These immersive, avatar-centric experiences centered around socialization provide a new-age sense of community for players, but also double as hunting grounds for predators. Most abusive relationships start in gaming experiences where a predator initially builds friendships by sending in-game currency as a way to gain their target’s trust. Under the guise of a new friend, the predator then moves the conversation to platforms such as Facebook Messenger and Kik where they can communicate more privately, asking victims for explicit images or videos. Once the predator has obtained this material, the ‘friend’ threatens to send it to the victim’s friends and family unless the victim sends cash or continues to send more explicit content. According to a review of prosecutions, court records, law enforcement reports and academic studies, some perpetrators are grooming hundreds and even thousands of victims. In 2020, there were at least four sextortion incidents in the UK where young men who were targeted saw no other way out than to take their own lives [2].

Harassment & cyberbullying

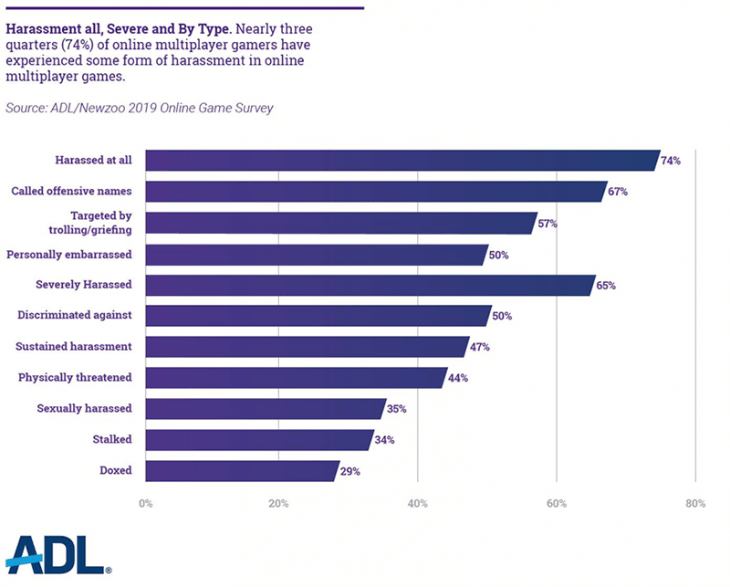

The prevalence of harassment and cyberbullying within gaming continues to be a huge issue. An extensive study conducted by the ADL revealed that nearly three quarters (74%) of online multiplayer gamers have experienced some form of harassment while playing.

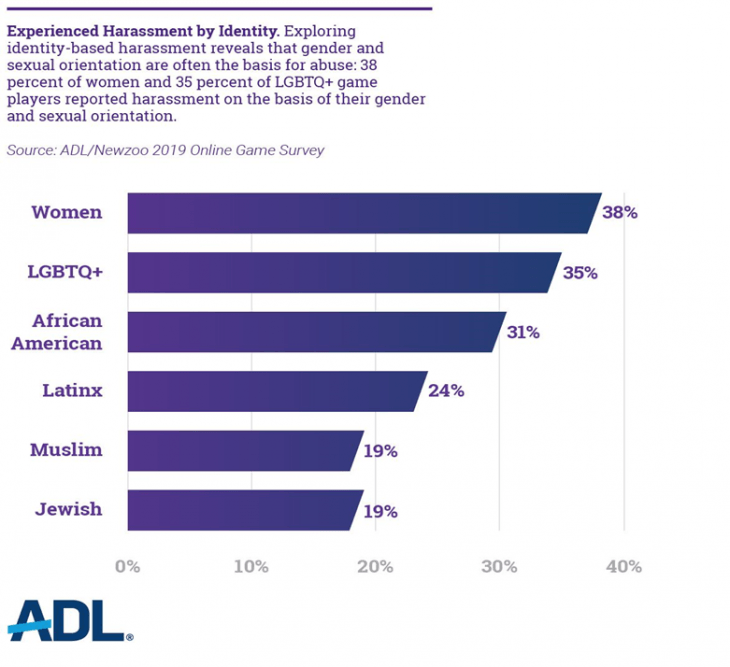

While some of this harassment may be related to player skill level and immaturity, 53% of online multiplayer gamers believed they had been targeted at some point based on their race/ethnicity, religion, ability, gender or sexual orientation. This particular form of harassment is absolutely reprehensible.

For younger players, video games often serve as an escape from bullying in the real world. However, as harassment and cyberbullying within games increase, victims may feel they can never get away from it, often leading to depression, anxiety and long-term damage to self-esteem.

Game Performance

On the game publisher side, harassment and cyberbullying create significant headwinds to a game’s success.

“In terms of retention, we see how long people play the game for, and how much they’re engaged. If they’re frustrated or unhappy, or… they don’t feel safe, they won’t stay. They will leave your game, and you will lose players”

- Elise Lemaire, Director of Operations, Rovio (makers of Angry Birds) [3]

The aforementioned ADL study found that 19% of players actually quit playing certain games due to harassment. Given the negative implications that churn has on user retention, revenue and scalability, it’s imperative that studios and publishers prioritize investing in content moderation.

The solution

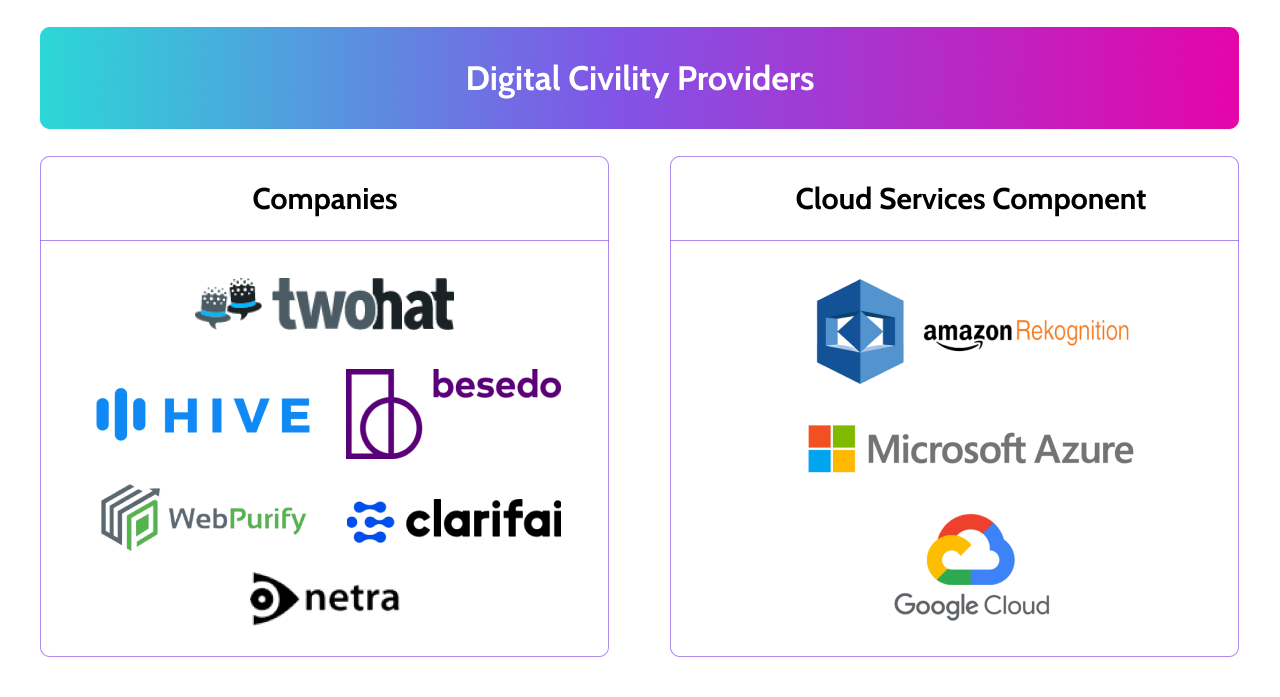

A handful of companies and services are poised to provide digital civility solutions to the increasing number of shared digital experiences within gaming and other industries. Facilitators of shared digital experiences must realize that the days of content moderation as a cost center are over. In today’s community-driven world, content moderation is an investment in a product’s future success, offering value through enhanced mitigation and monetization.

Mitigating the bad 🚫

The value of mitigating sextortion and harassment within shared digital experiences is straightforward. Fostering a more welcoming and positive user experience by quickly removing bad actors benefits player retention and LTVs and enables platform scalability.

Today’s digital civility companies are blazing a trail of innovation within content moderation by leveraging AI and deep learning models to filter interactions and to detect any signs of sextortion or harassment. Content from interactions, which occur in multiple languages and across various mediums, is passed through a digital civility company’s API for real-time analysis, which either allows acceptable content to pass through or flags potentially toxic content by severity for review. These companies also make it easy to produce customized content moderation frameworks for each client to ensure product- or game-specific lingo isn’t filtered out. As one can imagine, the number of interactions across gaming experiences is enormous. In theory, each incremental interaction processed should deepen the trenches of a digital civility provider’s data moat and increase recognition performance.
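To make the pass-or-flag flow above a bit more concrete, here is a minimal sketch in Python. It is not how any particular vendor implements its API: the `Severity` scale, the `moderate` function, the phrase lists and the `allowlist` parameter are all hypothetical names I’ve made up for illustration, and the simple phrase matching stands in for the trained models a real provider would use.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Iterable


class Severity(IntEnum):
    """Hypothetical severity scale; real providers define their own."""
    NONE = 0
    LOW = 1
    HIGH = 2


# Toy phrase lists standing in for a trained model (illustrative only).
HIGH_RISK_PHRASES = ("send pics", "kill yourself")
LOW_RISK_PHRASES = ("trash", "loser")


@dataclass
class Verdict:
    passed: bool        # True if the message is delivered without review
    severity: Severity  # how serious the flagged content appears to be


def moderate(message: str, allowlist: Iterable[str] = ()) -> Verdict:
    """Pass acceptable content through, or flag it by severity for review."""
    text = message.lower()
    # Blank out client-approved, game-specific lingo so it cannot trigger a match.
    for term in allowlist:
        text = text.replace(term.lower(), " ")
    if any(phrase in text for phrase in HIGH_RISK_PHRASES):
        return Verdict(False, Severity.HIGH)
    if any(phrase in text for phrase in LOW_RISK_PHRASES):
        return Verdict(False, Severity.LOW)
    return Verdict(True, Severity.NONE)


print(moderate("you are trash").severity.name)                         # LOW
print(moderate("pull the trash mob", allowlist=["trash mob"]).passed)  # True
```

The second call shows why per-client customization matters: in this made-up example, “trash mob” is ordinary game vocabulary, so a client that allowlists it avoids a false flag that the default rules would raise.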

To ensure scalability, some digital civility companies are also providing custom neural networks that are trained to triage user-generated reports. Historically, company moderation teams reviewed these reports by hand, compromising efficiency and attentiveness to serious issues such as threats, self-harm or sexual abuse. Now, trained AI models help to automate a team’s most consistent decisions for time-consuming tasks like closing false reports, allowing for the prioritization of truly malicious reports that require human review.
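A rough sketch of that triage logic might look like the following. Everything here is an assumption for illustration: the `Report` fields, the `URGENT_CATEGORIES` set and the 0.95 auto-close threshold are hypothetical, and the scores would come from a trained model rather than being supplied by hand.

```python
from dataclasses import dataclass

# Hypothetical categories that should always reach a human reviewer.
URGENT_CATEGORIES = {"threat", "self_harm", "sexual_abuse"}


@dataclass
class Report:
    report_id: int
    category: str              # label from an upstream model (assumed)
    false_report_score: float  # model's estimate that the report is unfounded, 0..1


def triage(reports, auto_close_threshold=0.95):
    """Split reports into an auto-closed list and a prioritized review queue."""
    auto_closed, review_queue = [], []
    for report in reports:
        urgent = report.category in URGENT_CATEGORIES
        # Only close automatically when the report is non-urgent and the
        # model is highly confident it is false.
        if not urgent and report.false_report_score >= auto_close_threshold:
            auto_closed.append(report)
        else:
            review_queue.append(report)
    # Urgent categories first, then the reports least likely to be false.
    review_queue.sort(
        key=lambda r: (r.category not in URGENT_CATEGORIES, r.false_report_score)
    )
    return auto_closed, review_queue
```

The design choice worth noting is that urgent categories are never auto-closed, no matter how confident the model is. Automation handles the repetitive decisions, while anything touching threats, self-harm or sexual abuse still lands in front of a person.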

Leveraging the good 📈

According to a recent study that analyzed a variety of product experiences containing chat features, 82% of interactions were positive, while only about 3% were harmful. The remaining 15% of chat was nuanced and fell somewhere in between. It’s also worth noting that these percentages stayed relatively consistent regardless of spikes in chat volume. The obvious takeaway here is that companies should work to completely eradicate harmful or potentially harmful behavior, which we covered in the previous section. However, the hidden gem is actually in the handling of the positive behavioral data.

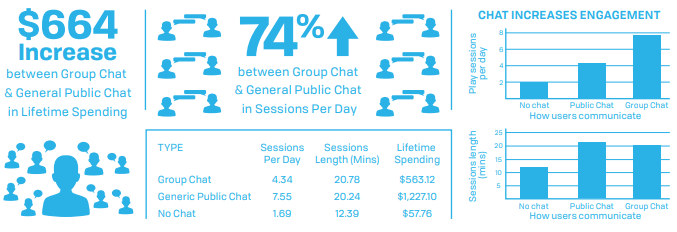

Community chat within shared digital experiences is a proven enhancement to monetization. For instance, within gaming experiences, chat participation increases daily sessions more than 4x and session length by 60%. Moreover, the LTV of players who chat is 10x to 20x higher than those who don’t.

Leveraging content moderation infrastructure can help product teams discover what their most valuable, long-term users are talking about and then use those insights to catalyze the same conversations and behavior amongst newer or less engaged users. This is especially crucial for the world of gaming, which primarily leverages a digital commerce-driven revenue model (e.g., microtransactions, tipping) that benefits from users interacting with each other and sticking around.

This brings me to my core thesis:

Suppliers of scalable, automated content moderation infrastructure that not only filters out malicious behavior, but also intelligently catalyzes user engagement provide an operational necessity to facilitators of shared digital experiences.

Here are the companies that I believe are best positioned to compete within the digital civility space:

I view Two Hat as the market leader in digital civility solutions, as exemplified by blue chip gaming customers such as Epic Games, Roblox, Supercell, Activision and others who are collectively driving the future of shared digital experiences forward. Across its impressive customer base and differentiated suite of products, Two Hat helps classify and escalate more than 100 billion human interactions in real time every month - six times the monthly volume of Twitter!

10x Path: Horizontal use cases for digital civility

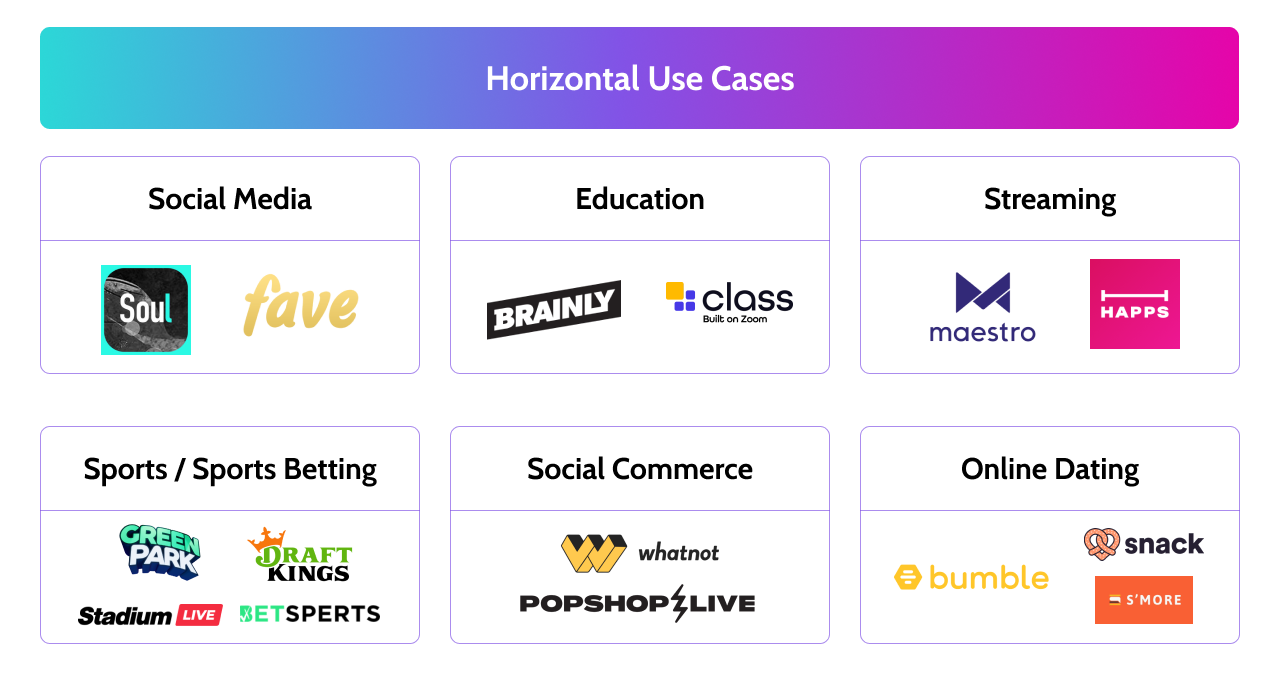

While gaming represents the industry with some of the most pressing content moderation needs, horizontal use cases within social media and other digital communities provide an incredibly large TAM to sell digital civility infrastructure into - not to mention expansion to support additional regions and languages. Let’s explore the social media use case in more detail and then outline additional whitespace opportunities.

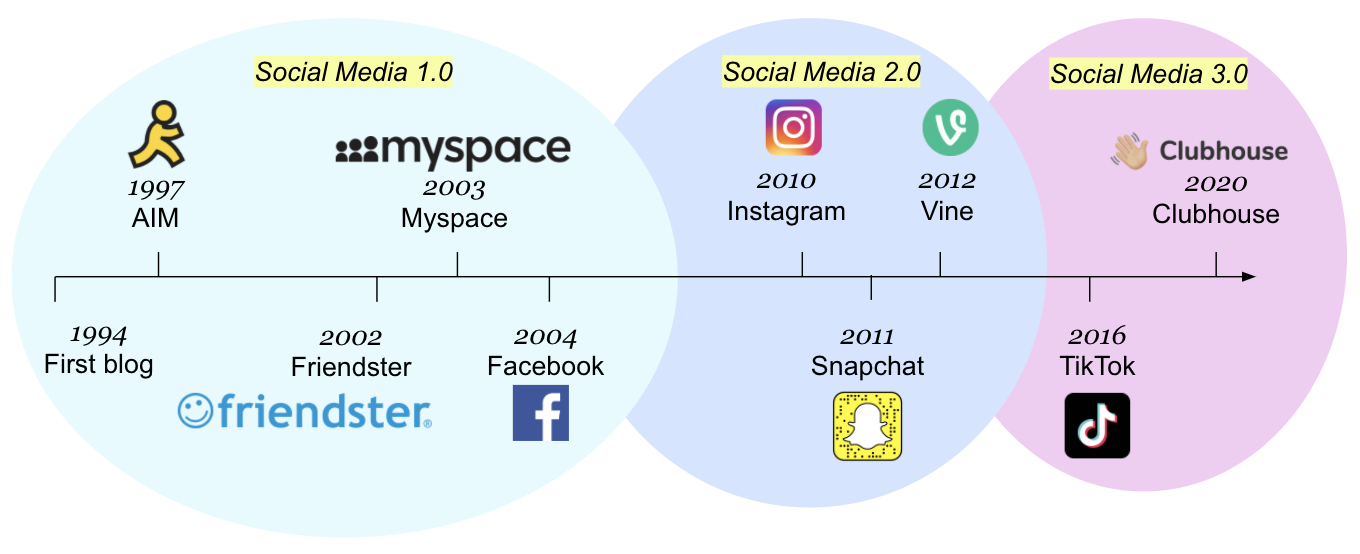

I’m going to borrow a few concepts from investors Tasha Kim and Rex Woodbury, who do an excellent job covering culture and community within the consumer internet space. Tasha recently published an article detailing how we’ve entered the era of Social Media 3.0. Social Media 3.0 differs from Social Media 2.0 in that the former is characterized by a 100% immersive experience that influences IRL culture through creation, while the latter simply mirrors real world events. For example, Social Media 2.0 apps like Instagram facilitate venues to display clout, while Social Media 3.0 apps like TikTok or Clubhouse serve as a virtual ‘third place’ that fosters connection, community and shared interest.

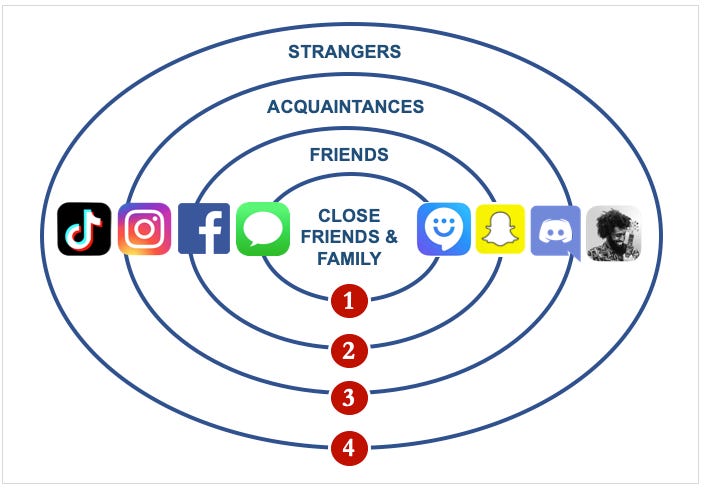

To help crystallize this a bit more, Rex pairs Tasha’s framework with the helpful graphic below to depict the varying realms of interactivity and inclusion for each step of social media’s evolution.

The last decade (i.e., Social Media 2.0) is best represented by rings 2 and 3, in which platforms functioned as extensions of IRL. In other words, more clout = more engagement amongst friends and acquaintances, which doesn’t truly facilitate an immersive and *authentic* community. The next decade (i.e., Social Media 3.0) will be represented by rings 1 and 4. Here’s Rex’s take:

“While Ring 1 platforms like WhatsApp and iMessage will benefit from our desire for intimate connections, the Ring 4 platforms are most interesting. These are the platforms that connect us with strangers and that create communities around us that we didn’t even know we wanted to be a part of.”

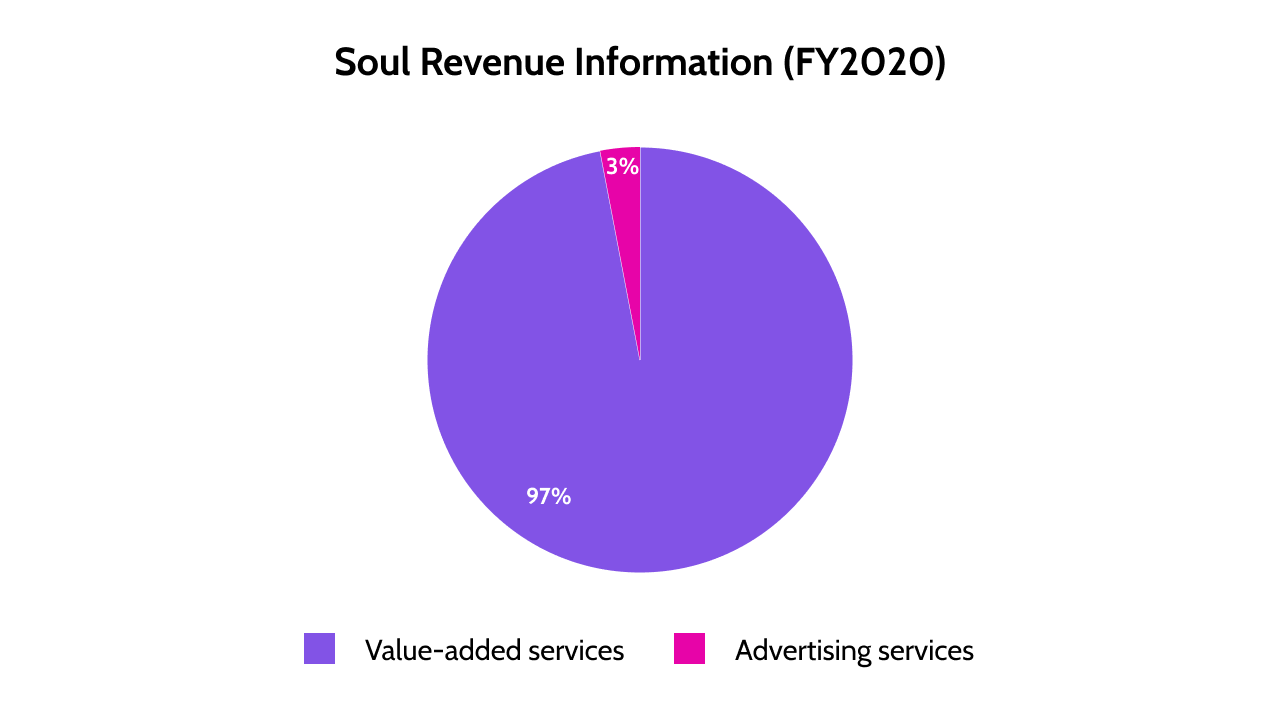

The shift in social media platform design also comes with a shift in monetization models, transitioning from digital advertising to digital commerce. Soul, China’s 5th most popular social app and a leading indicator for how Social Media 3.0 apps may look and feel in the future, provides a great case study. In its recent F-1 filing to go public on the Nasdaq, Soul describes itself as an algorithm-driven online social playground where players’ avatars can hang out on ‘planets’ built around shared interests. Notably, the company makes 97% of its revenue ($104M over the last 12 months) from ‘value-added services’, which consist of virtual items and membership subscriptions. In other words, it implements a take-rate on microtransactions for things like avatar upgrades, sending virtual gifts to friends and access to members-only virtual items and enhanced social networking functionalities.

While the increase in authenticity and shared interest in Social Media 3.0 should, in theory, increase digital civility, content moderation infrastructure still remains an operational necessity for experiences that facilitate the ability to socialize and interact in ‘parallel universes’ with self-contained immersive communities composed of strangers. Moreover, as we start to see more social media experiences utilize digital commerce-based revenue models in the West, the importance of using content moderation to enhance engagement and monetization cannot be overstated.

Beyond social media, I caught up with Two Hat Founder & Executive Chairman Chris Priebe to get his thoughts on additional whitespace opportunities for digital civility infrastructure. Chris told me that they’re very keen on the education space given its forced, rapid digitization due to the pandemic and given schools’ zero-tolerance policy for bullying. He also highlighted streaming experiences as an exciting opportunity as well as adding audio/voice to Two Hat’s content moderation capabilities - a format that has long been difficult to solve for.

Taking this into account, I’ve combined Chris’s thoughts with my own to quantify the landscape of horizontal use cases for digital civility infrastructure outside of gaming.

Risks

The moderation of billions of user interactions doesn’t exactly align with the recent push to keep user data private. News stories exposing security breaches at major retailers and social media giants selling user data have cultivated feelings of data insecurity amongst consumers. According to a Pew Research Center survey, 93% of internet users want more control over their data and information. However, when it comes to ensuring safety against sextortion, harassment and self-harm, which side gives? When I posed this question to Chris Priebe, he explained that Two Hat is in the ‘safety trumps privacy’ camp, stating:

“It’s not too dissimilar to the framework of free speech. One is entitled to free speech as long as it doesn’t endanger or infringe upon other peoples’ rights and safety. For example, you can’t just yell “bomb” in an airport”

He also went on to liken the presence of content moderation within games to that of a bouncer at a club:

“That’s why people have bouncers at the front door. Somehow with games, we don’t think we need to put bouncers at the front door, and we wonder why things go so terribly wrong.”

Final Thoughts

If you’re bullish on the future growth of shared digital experiences within gaming, social media, education, streaming and other digital communities, digital civility infrastructure provides diversified exposure that will grow in lockstep with the growth across all of the aforementioned industries. Whether it’s used to mitigate malicious behavior such as sextortion and harassment or to enhance monetization by intelligently inspiring users to engage with each other and stick around, digital civility is an operational necessity for the facilitators of the shared digital experiences that will define our future.

[1] https://www2.deloitte.com/us/en/insights/industry/technology/digital-media-trends-consumption-habits-survey/summary.html

[2] https://www.thestar.com.my/aseanplus/aseanplus-news/2021/06/12/for-lust-and-money-when-online-sexual-encounters-end-in-despair-and-death

[3] https://www.twohat.com/solutions/case-studies/case-study-rovio/