Alternative Data as Novel Digital Biomarkers

A look at how passively collected user & device data + AI can be used to craft more longitudinal care models.

Hey there! I’m Jordan Pascasio, Investor at Next Ventures.

Every month I write a piece encompassing whole person health. If you would like to receive it directly in your inbox, subscribe now.

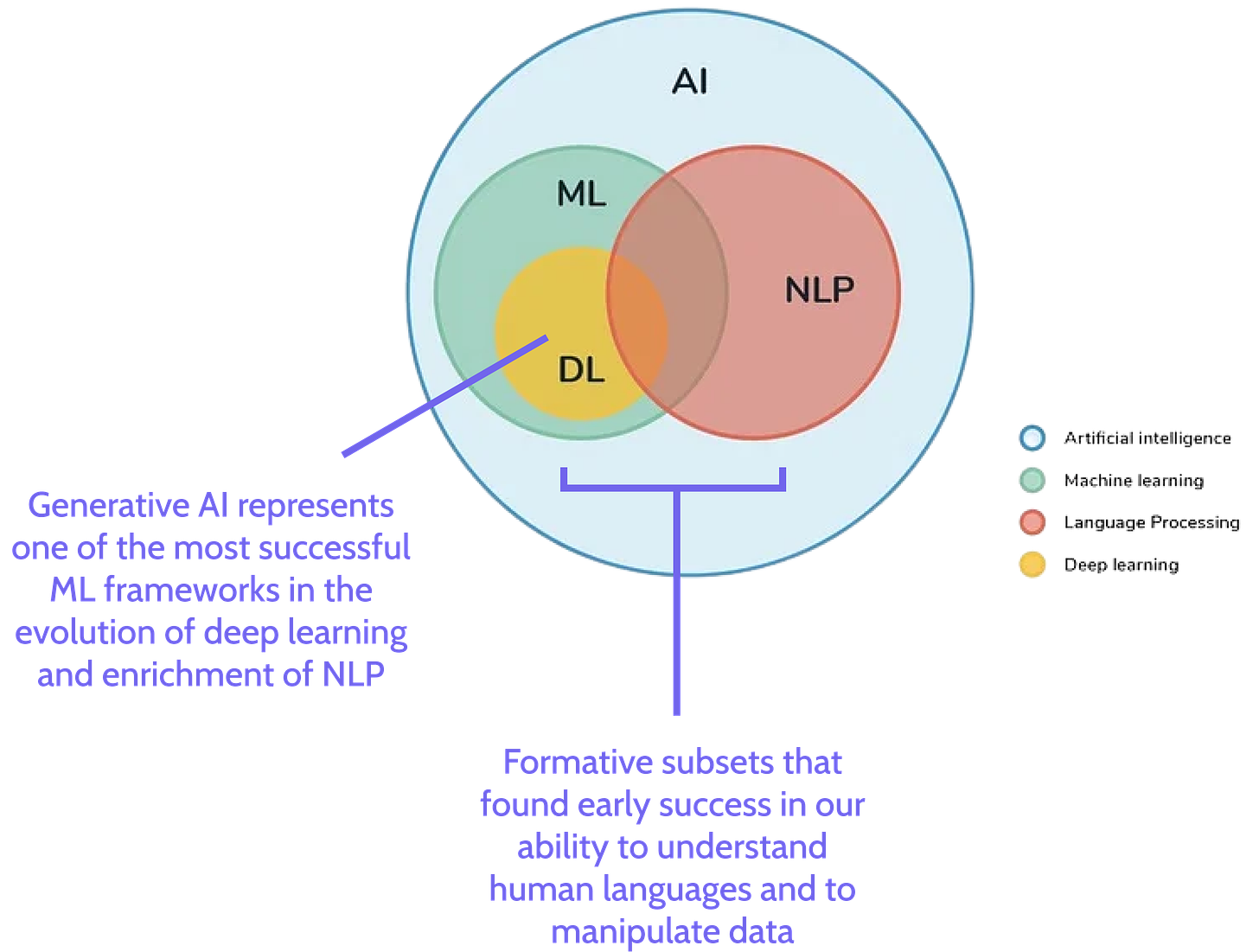

Recent speculation around the use of new, revolutionary technology within healthcare has rightfully centered on generative AI. Large Language Models (LLMs) like OpenAI’s GPT-3.5 are all the rage, potentially capable of reimagining a multitude of workflows such as bespoke care plan creation, clinical decision support or the streamlining of administrative tasks. Some early adopters have even made the case for LLM-powered chatbots as alternative providers of talk therapy, but let’s not get ahead of ourselves here. We’re still a ways away from any piece of technology passing the Turing test (I hope).

And yet, while generative AI still has one foot firmly in the “toy” phase, non-deep-learning applications of formative AI subsets such as natural language processing (NLP) and machine learning (ML) are emerging with novel use cases in care delivery.

Background: AI-based Manipulation of User Data

Companies across a handful of verticals have leveraged NLP and ML (without the deep learning aspect) to manipulate user & device data for years, helping to glean mission-critical insights and to influence users’ future behavior.

For example, in gaming, automated content moderation is essential for sustaining digital civility amongst players. In digital advertising, ML algorithms underpin the ad platforms of social media companies like Meta, serving contextually relevant ads to users with scary accuracy, so much so that app store operators like Apple cracked down on advertisers’ access to user-level data and attribution.

And now within healthcare, we’re starting to see user & device data + AI used to produce novel digital biomarkers, affording providers more longitudinal context when delivering care. However, in an increasingly privacy-conscious world, the question remains:

How should we think about the ethics of accessing user & device data when it’s used to improve the quality of care?

User & Device Data As Novel Digital Biomarkers

In terms of user & device data adoption within healthcare, digital phenotyping within behavioral health (BH) has seen the most traction thus far. Digital phenotyping is a novel computational approach that relies on the real-time quantification of human behavior through continuous monitoring of digital biomarkers.[1]

By now, you’re probably wondering what exactly user & device data consists of. Mobile devices offer a multitude of novel digital biomarkers such as:

Social interactions: voice tone detection, number of messages sent, language used in virtual engagements

Digital interactions: access to certain apps or sites

Device usage: phone locks/unlocks, screen time

All of these can be collected passively and continuously.
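To make “passive and continuous” collection a bit more concrete, here is a minimal Python sketch of how raw device events might be rolled up into daily biomarker features. The `DeviceEvent` schema, the event names (`unlock`, `message_sent`, `screen_session`) and the `daily_features` helper are hypothetical, chosen for illustration only; real apps get this data through their own OS-level APIs and permission prompts.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class DeviceEvent:
    """Hypothetical passive event record (not any vendor's actual schema)."""
    timestamp: datetime
    kind: str                # assumed values: "unlock", "message_sent", "screen_session"
    duration_s: float = 0.0  # only meaningful for screen sessions


def daily_features(events):
    """Aggregate raw events into per-day counts and screen time in minutes."""
    days = defaultdict(lambda: {"unlocks": 0, "messages_sent": 0, "screen_time_min": 0.0})
    for event in events:
        day = days[event.timestamp.date()]
        if event.kind == "unlock":
            day["unlocks"] += 1
        elif event.kind == "message_sent":
            day["messages_sent"] += 1
        elif event.kind == "screen_session":
            day["screen_time_min"] += event.duration_s / 60
        # Unknown event kinds contribute nothing to the counts.
    return dict(days)


events = [
    DeviceEvent(datetime(2023, 5, 1, 8, 0), "unlock"),
    DeviceEvent(datetime(2023, 5, 1, 8, 1), "screen_session", 900),
    DeviceEvent(datetime(2023, 5, 1, 21, 30), "message_sent"),
    DeviceEvent(datetime(2023, 5, 2, 7, 45), "unlock"),
]
print(daily_features(events))
```

Daily aggregates like these are the kind of input that can sit next to traditional biomarkers (heart rate, steps, sleep) in a downstream model.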

This data can then be used alongside traditional digital biomarker data (e.g., heart rate) to more accurately gauge BH condition severity (e.g., depression, anxiety) or to identify and mitigate negative events (e.g., ED utilization, relapse) upstream.

After all, if Instagram can use passively collected user data to serve you your next purchase before you’ve even had time to contemplate what you need next, there’s no reason why providers shouldn’t be able to use a similar, AI-based approach to better identify illness or reduce ED utilization. The importance of the passive and continuous collection method cannot be overstated given historical biases in self-reporting and the lack of time in primary care settings.

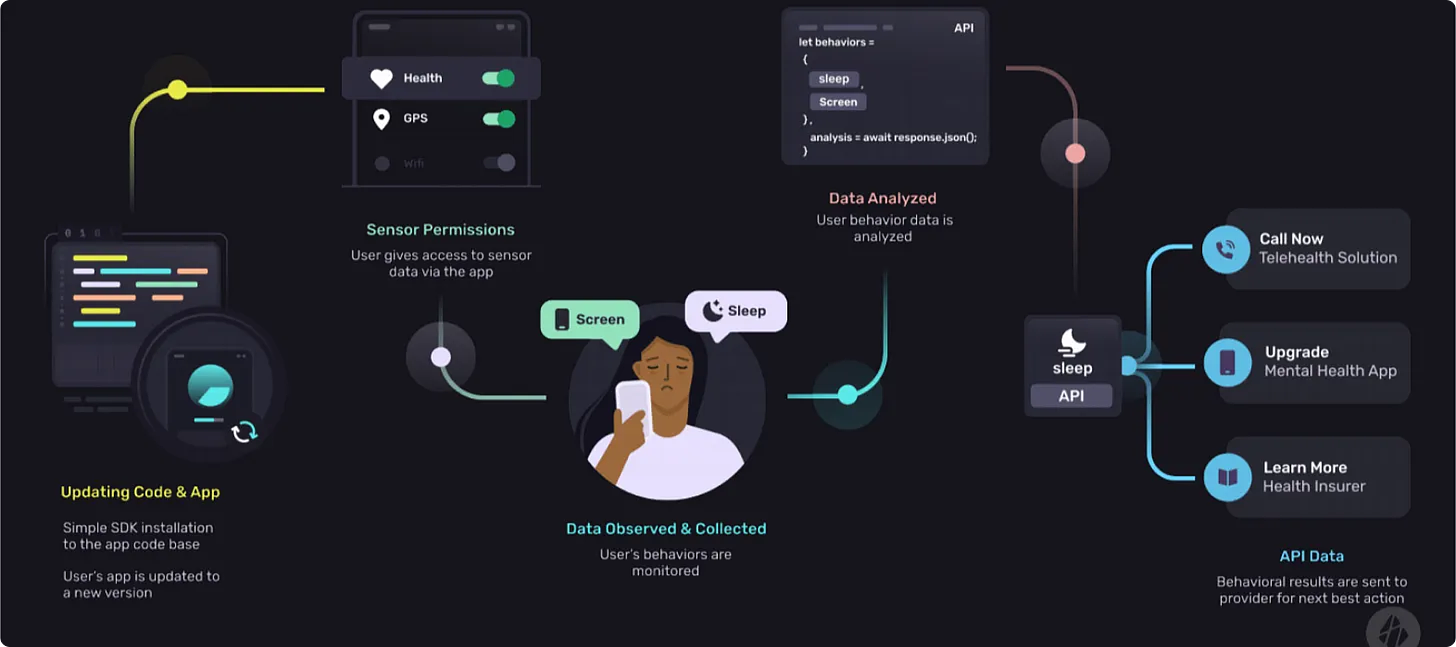

Below is a non-exhaustive overview of companies that leverage passively collected user & device data + AI to help providers craft more longitudinal BH care models. It’s important to note that this data can only be used once the patient has opted in.

Behavidence & Sahha

Behavidence & Sahha offer API-first (B2B) infrastructure that uses nonintrusive user & device data as an alternative way to assess the severity of conditions such as depression and anxiety. The companies’ algorithms rely on regression models that fit passively collected digital biomarkers to self-reported PHQ-9 and GAD-7 assessments (the widely used depression and anxiety questionnaires), so their accuracy is measured against those scales.
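The regression setup described above can be sketched as ordinary least squares: daily biomarker features are the predictors and the self-reported PHQ-9 total is the target. This is a minimal, dependency-free Python illustration, not Behavidence’s or Sahha’s actual model; the two features (daily unlocks, sleep hours) and the five training rows are invented toy values, constructed so the fit is exact.

```python
def solve(A, b):
    """Solve the square linear system A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [bi] for row, bi in zip(A, b)]  # augmented matrix [A | b]
    for col in range(n):
        # Swap in the row with the largest pivot to keep the arithmetic stable.
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    # Back-substitute from the last row upward.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def fit_ols(X, y):
    """Least-squares fit of y = b0 + b1*x1 + ... + bk*xk via the normal equations (X^T X) b = X^T y."""
    rows = [[1.0] + list(x) for x in X]  # prepend an intercept column
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


def predict(coef, x):
    """Apply fitted coefficients (intercept first) to one feature vector."""
    return coef[0] + sum(c * xi for c, xi in zip(coef[1:], x))


# Toy, made-up rows of (daily unlocks, sleep hours) with PHQ-9 totals built to
# follow PHQ-9 = 2 + 0.1*unlocks - 0.5*sleep_hours exactly. Not real patient data.
X = [(50, 8), (80, 6), (120, 6), (60, 8), (100, 4)]
y = [3.0, 7.0, 11.0, 4.0, 10.0]

coef = fit_ols(X, y)
print([round(c, 3) for c in coef])
print(round(predict(coef, (90, 6)), 2))
```

A production model would be trained on far more people and features and validated on held-out users, and a library such as scikit-learn or statsmodels would replace the hand-rolled solver.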

Novel and traditional digital biomarkers such as phone locks/unlocks, app usage, steps and sleep are used in both companies’ models, with 75%+ accuracy (so far) in identifying depression and anxiety relative to the self-reported assessment scales.
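How the 75%+ figure is defined isn’t specified here. One common way to score a model against a questionnaire is to turn both scores into a positive or negative screen at a cutoff and measure how often they agree. The sketch below assumes the widely used PHQ-9 cutoff of 10 (moderate symptoms or worse); the `screening_accuracy` helper and the scores are hypothetical.

```python
PHQ9_CUTOFF = 10  # assumed cutoff: a score of 10+ is commonly treated as a positive screen


def screening_accuracy(predicted, reported, cutoff=PHQ9_CUTOFF):
    """Fraction of people whose model-based screen matches their questionnaire screen."""
    if not predicted or len(predicted) != len(reported):
        raise ValueError("expected two non-empty score lists of equal length")
    matches = sum(
        (p >= cutoff) == (r >= cutoff) for p, r in zip(predicted, reported)
    )
    return matches / len(predicted)


# Illustrative, made-up scores (not real patient data).
predicted = [4.0, 12.5, 9.0, 15.0, 7.0]
reported = [3, 14, 11, 16, 6]
print(screening_accuracy(predicted, reported))  # 0.8
```

Plain agreement can look flattering when most people screen negative, so sensitivity and specificity are usually reported alongside it.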

In theory, using integrative device data alongside traditional care models can afford providers continuous, passive monitoring between appointments and make assessments less dependent on the patient’s state of mind and potentially biased perception of symptoms.

Marigold Health

Marigold Health is an anonymous social network in which people with substance use and/or mental health conditions support each other. Think AA or NA 2.0 that taps into the secular tailwinds of virtual third places. In other words, the company leverages shared experiences and interests to provide empathetic support and to drive better health outcomes.

I’m a huge fan of capital-light partnership/enablement models that help providers drive better outcomes and/or get closer to the premium dollar. Here, Marigold’s use of digital community as wraparound support for providers (e.g., opioid treatment programs, or OTPs) is an elegant way to garner contextual patient data, decrease utilization and ultimately reduce spend.

But wait…what about AI? Marigold’s NLP technology passively analyzes group engagement to flag relevant clinical and nonclinical needs, triggering “alerts” that can be sent to care providers.

This approach is similar to content moderation applications in gaming in which NLP is used to triage player engagements and proactively mitigate malicious behavior such as bullying or sextortion.

In Marigold’s case, the company’s NLP passively monitors emotional sentiment in messages for indications of relapse or self-harm and triggers upstream interventions when they are identified.
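As a rough illustration of the alerting flow described above (scan messages, flag risk, notify a care team), here is a deliberately simple Python sketch. It swaps in plain keyword matching for the sentiment-analysis NLP described in the text; the phrase lists, the `Alert` record and `scan_messages` are hypothetical and are not Marigold’s implementation.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny phrase lists. A real system would use a trained,
# clinically validated classifier and route flags to human reviewers.
RISK_PHRASES = {
    "self_harm": {"hurt myself", "end it all", "no reason to live"},
    "relapse": {"used again", "relapsed", "craving"},
}


@dataclass
class Alert:
    """Hypothetical alert record that a care team might receive."""
    member_id: str
    category: str
    message: str


def scan_messages(messages):
    """Return one Alert per (message, category) pair whose phrases appear in the text."""
    alerts = []
    for member_id, text in messages:
        # Lowercase and collapse whitespace so simple substring matching is more robust.
        normalized = re.sub(r"\s+", " ", text.lower())
        for category, phrases in RISK_PHRASES.items():
            if any(phrase in normalized for phrase in phrases):
                alerts.append(Alert(member_id, category, text))
    return alerts


messages = [
    ("m1", "Great meeting today, thanks all!"),
    ("m2", "Honestly I relapsed last night"),
    ("m3", "Some days there's  no reason to live"),
]
for alert in scan_messages(messages):
    print(alert.member_id, alert.category)
```

Keyword lists miss paraphrases and misfire on negations (“I’m not craving anything” would still be flagged), which is one reason real systems lean on learned models plus human review.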

Notably, the company has produced impressive outcomes (e.g., reduced inpatient and rescue care) in its Medicaid MCO partnerships, providing substantial cost savings across substance use disorder (SUD) populations that have increasingly burdened payers.

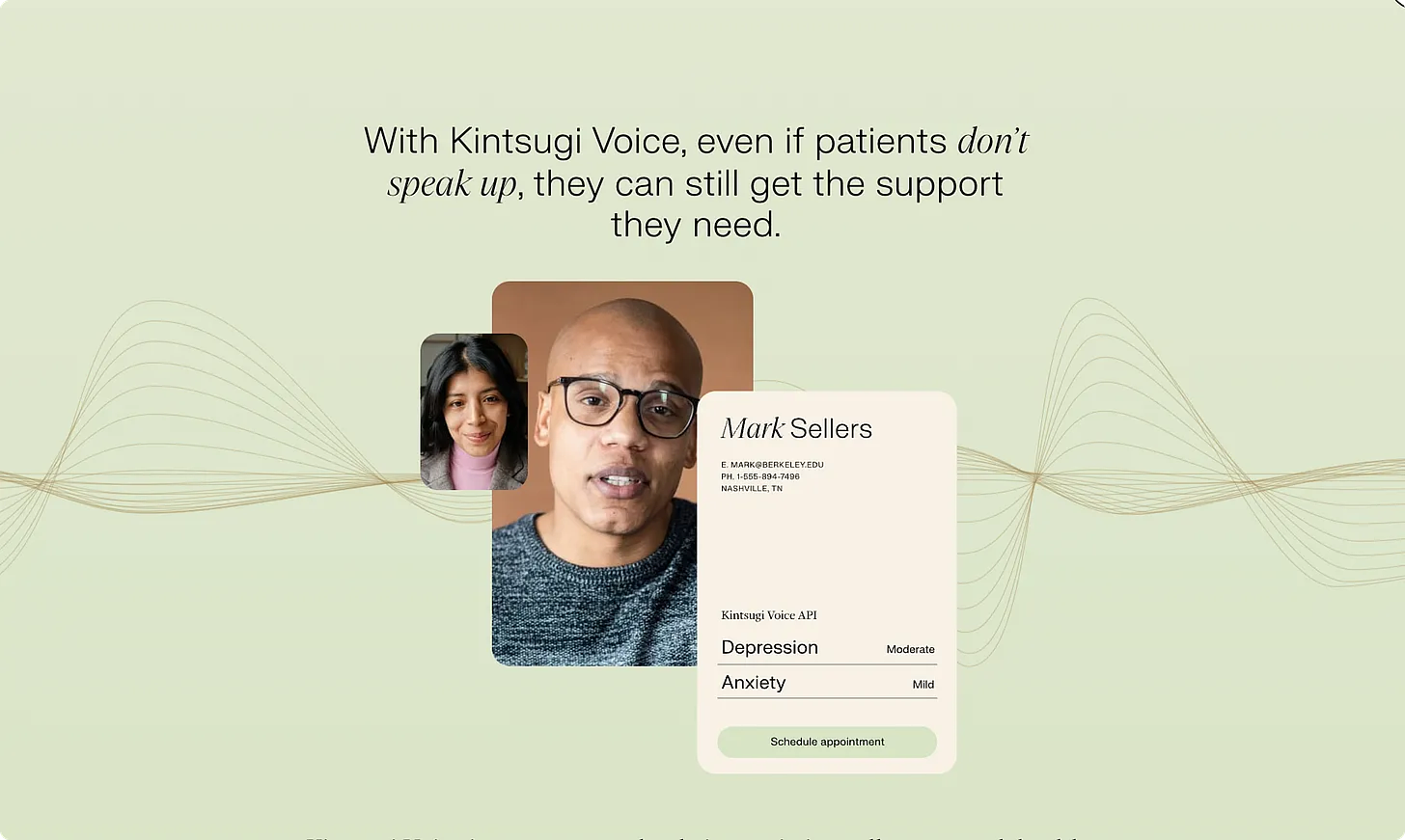

Kintsugi

The Kintsugi team has received a number of accolades and distinctions for their AI technology, Kintsugi Voice, which detects signs of clinical depression and anxiety in free-form speech.

Kintsugi Voice is an API-first infrastructure platform that can be integrated into existing call centers, telehealth care models and patient monitoring apps to detect symptoms of BH disorders in real time and in a passive, objective manner.

The solution serves a multitude of use cases across payers (e.g., RAF scoring) and providers (e.g., early detection, closing care gaps) to ultimately empower care delivery, drive lower costs and improve patient outcomes.

Ethical Considerations for Novel Digital Biomarkers

Tying back to the question posed in the introduction, we must take a view on where the collection and analysis of user data for provider enablement falls on the ethics scale.

After talking to a handful of founders building in this nascent space, I found the following framework to be helpful in encapsulating their views on this issue, which I tend to agree with:

If the user & device data is benefiting the corporation/provider more than the user/patient, there is a higher risk of the service being perceived as unethical by the user/patient and hence a lower propensity for said user/patient to opt in to sharing their data

A good proxy here was Meta’s historical use of each user’s unique device ID called the Identifier for Advertisers (IDFA) to measure mobile in-app advertising effectiveness

While this approach may have resulted in more contextually relevant ads for users, it compromised consumer choice for growth (i.e., ad platform revenue)

As a result, it should be no surprise that the App Tracking Transparency (ATT) opt-in rate in the US is just ~20% after Apple began requiring user consent to share the IDFA in April 2021[2]

However, if the user & device data is benefitting the user/patient more than the corporation/provider, there is a lower risk of the experience being perceived as unethical by the user/patient and hence a higher propensity for said user/patient to opt in to sharing their data

While it’s still early days, one infrastructure founder shared with me that an insurance-based customer ran an internal pilot that resulted in an opt-in rate of ~15%, below the ~20% ATT benchmark. This was a bit surprising given that data-driven underwriting could lead to lower annual premiums (i.e., money saved), a tangible benefit for the member. However, this initial data seems to suggest that members value the privacy of their data more than potential cost savings. While the program could benefit both parties, I’d argue that the carrier’s actions (and its communication of the program) may come across as a bit self-serving in the eyes of the member.

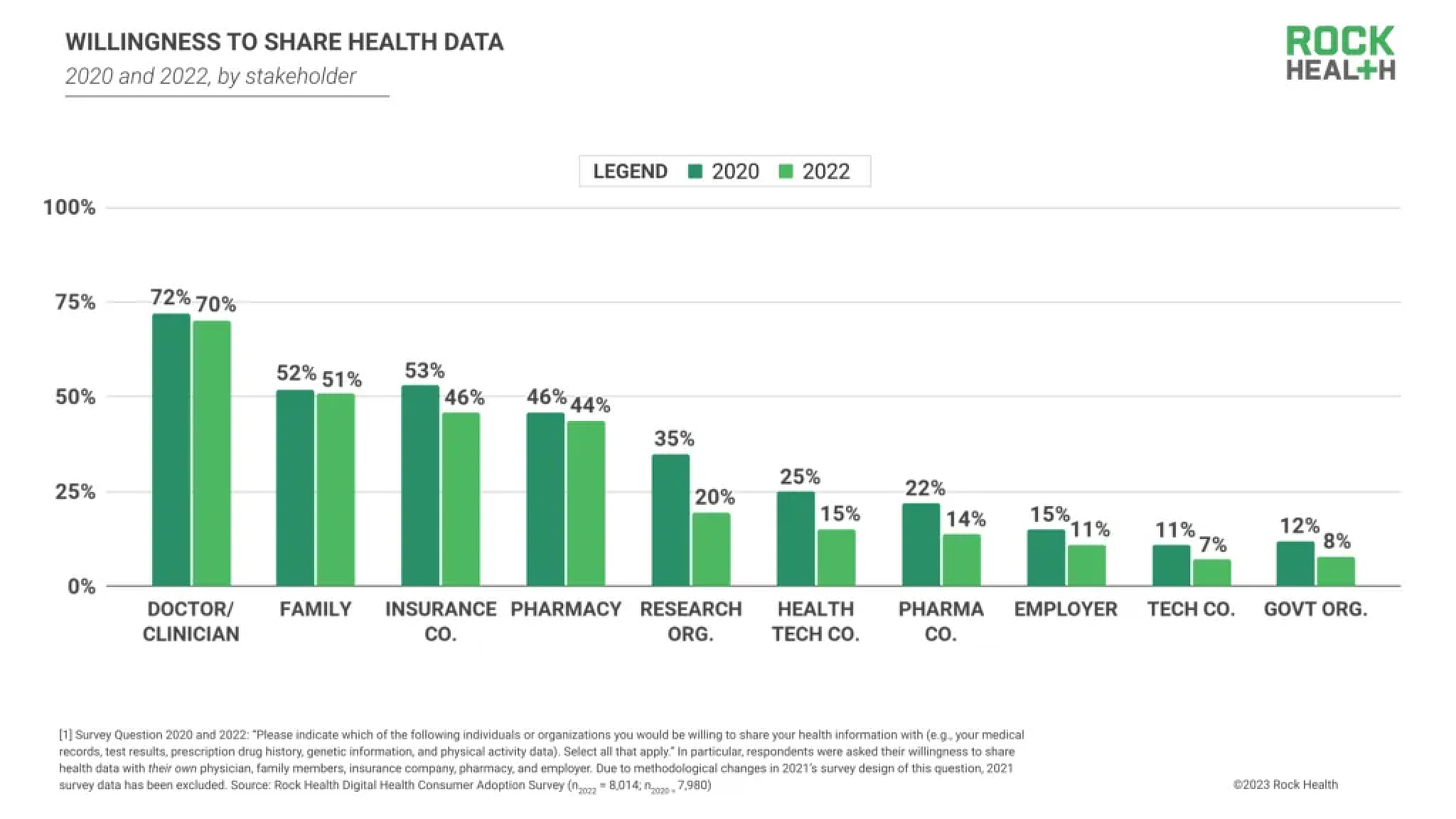

I’m most curious to see data sharing opt-in rates when said data is used to drive better health outcomes, which I believe carries the most positive perception amongst users (i.e., patient benefits more than the provider). While I was writing this piece, Rock Health released some timely survey data that was helpful in corroborating that view.

The results showed that a majority of respondents were willing to share their health data with their provider, but much more reluctant to share personal information with organizations whose incentives may conflict with or expand beyond their personal interests. This dynamic suggests that consumers are most motivated to share data when that exchange stands to directly improve their quality of care.[3]

Final Thoughts

While generative AI in healthcare is stoking the most enthusiasm in the space at the moment, the progress made within non-deep learning applications of NLP and ML over the past decade also presents a real lever to help transform care models.

As more companies apply these formative AI subsets to user & device data, it will be important to carefully evaluate the benefits and risks of these technologies and ensure that they are used responsibly and ethically. This is especially important as calls for data privacy grow louder.

In a perfect world, the various subsets that collectively fall under the AI umbrella will come together to help create care models that are truly tech-enabled, helping to drive efficiencies that are accretive to care margins and that allow providers to transition into more favorable at-risk arrangements.

I’d love to hear your thoughts on whether you’d allow passively collected data from your devices to be shared with your provider if it meant that the quality of your care could be improved. Feel free to comment below or DM me!

If you are building or investing in the healthcare or health & wellness space, I’d love to chat! Please reach out to jordan@nextventures.com, or I can be found here.

[1] https://medinform.jmir.org/2022/8/e38943

[2] https://www.flurry.com/blog/att-opt-in-rate-monthly-updates/

[3] https://rockhealth.com/insights/consumer-adoption-of-digital-health-in-2022-moving-at-the-speed-of-trust/?mc_cid=4eb2bcee0a

In most situations where the terms were clearly outlined, I would opt into a data sharing agreement that intended to improve the quality of my care, for both acute and chronic conditions. However, my preference would be a scenario in which my data was used to refine preventative protocols.